HA Overview

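HA (High Availability) means keeping a service running without interruption, 24/7.

The key to high availability is eliminating single points of failure. Strictly speaking, Hadoop HA should be broken down into the HA mechanisms of the individual components: HDFS HA and YARN HA.

Before Hadoop 2.0, the NameNode was a single point of failure (SPOF) in an HDFS cluster.

The NameNode affects HDFS cluster availability in two main ways:

If the NameNode machine fails unexpectedly (for example, it crashes), the cluster is unavailable until an administrator restarts the NameNode.

If the NameNode machine needs planned maintenance (a software or hardware upgrade), the cluster is unavailable for that period.

HDFS HA solves these problems by configuring two NameNodes, one Active and one Standby, so that the cluster has a hot standby for the NameNode. If a failure occurs, such as a machine crash, or a machine needs to be upgraded or maintained, the NameNode role can be switched quickly to the other machine.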

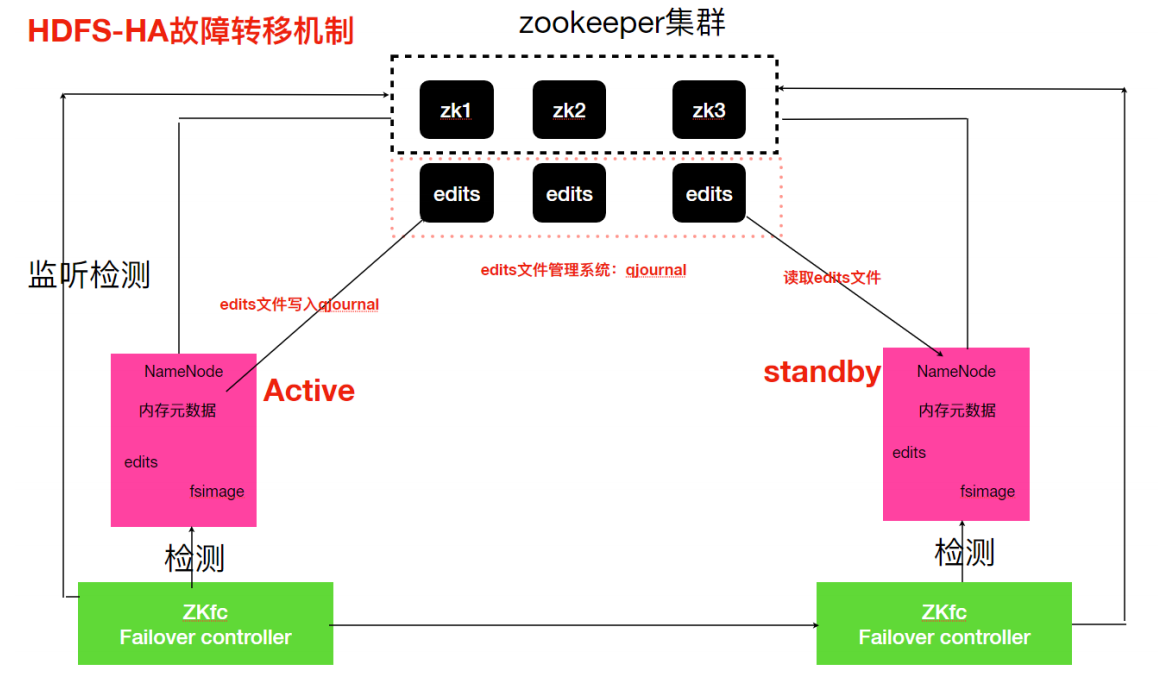

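HDFS-HA Working Mechanism

Eliminate the single point of failure with two NameNodes (Active/Standby).

Key points of HDFS-HA

The way metadata is managed must change: each NameNode keeps its own copy of the metadata in memory, only the Active NameNode may write to the edit log while both NameNodes can read it, and the shared edits live in shared storage (in this setup, the JournalNode quorum configured in dfs.namenode.shared.edits.dir).

A state management module is required

It is implemented by zkfailover (the ZKFailoverController), which runs on every node that hosts a NameNode. Each zkfailover monitors the NameNode on its own node and uses ZooKeeper to record that NameNode's state. When a state switch is needed, zkfailover performs it, and the switch must prevent split brain (two Active NameNodes in the cluster at the same time).

Passwordless SSH login must work between the two NameNodes

Fencing: only one NameNode serves clients at any given time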
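The fencing rule above boils down to an ordering constraint: the old Active must be fenced before the local NameNode is promoted, and if fencing fails the promotion must not happen. The sketch below shows only that ordering; `FENCE_CMD`, `fence_old_active`, and `promote_local` are hypothetical names, and in a real cluster the ZKFC performs these steps itself using the method set in `dfs.ha.fencing.methods` (`sshfence` in this setup).

```shell
#!/usr/bin/env bash
# Sketch of "fence the old Active first, promote only on success".
# FENCE_CMD, fence_old_active and promote_local are hypothetical; the real
# ZKFC does this internally with the configured fencing method.

fence_old_active() {
  # Succeeds only if the fencing command reports the old Active is stopped.
  # Defaults to `false`: an unknown fencing result counts as a failure.
  "${FENCE_CMD:-false}"
}

promote_local() {
  echo "local NameNode -> active"
}

failover() {
  if fence_old_active; then
    promote_local
  else
    # Promoting without a successful fence could leave two Actives.
    echo "fencing failed, staying standby" >&2
    return 1
  fi
}
```

Defaulting to failure is deliberate: when the state of the old Active is unknown, staying Standby is the safe choice.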

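HDFS-HA Automatic Failover Mechanism

Configure HDFS-HA for automatic failover. Automatic failover adds two new components to an HDFS deployment: ZooKeeper and the ZKFailoverController (ZKFC) process. ZooKeeper is a highly available service for maintaining small amounts of coordination data, notifying clients of changes to that data, and monitoring clients for failures. Automatic HA failover relies on the following ZooKeeper features:

Failure detection: each NameNode machine maintains a persistent session in ZooKeeper. If the machine crashes, its ZooKeeper session expires, notifying the other NameNode that a failover should be triggered.

Active NameNode election: ZooKeeper provides a simple mechanism for exclusively electing one node as Active. If the current Active NameNode crashes, another node can take a special exclusive lock in ZooKeeper indicating that it should become the next Active.

ZKFC is the other new component in automatic failover. It is a ZooKeeper client that also monitors and manages the state of the NameNode. Every host that runs a NameNode also runs a ZKFC process, which is responsible for:

Health monitoring

ZKFC periodically pings the NameNode on the same host with a health-check command. As long as the NameNode responds with a healthy status in time, ZKFC considers the node healthy. If the node crashes, freezes, or enters an unhealthy state, the health monitor marks it as unhealthy.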
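A toy version of this health-monitor loop, for illustration only: `monitor_health` is a hypothetical helper, not part of Hadoop. On a NameNode host the check command could be the real `hdfs haadmin -checkHealth nn1`, which exits with status 0 when the NameNode is healthy.

```shell
#!/usr/bin/env bash
# Toy ZKFC-style health monitor (monitor_health is hypothetical).
# Example check command on a real node: hdfs haadmin -checkHealth nn1

# monitor_health ROUNDS INTERVAL CMD [ARGS...]
# Runs CMD up to ROUNDS times, INTERVAL seconds apart, printing the state of
# each round. Returns 1 as soon as CMD fails (the node is marked unhealthy).
monitor_health() {
  local rounds=$1 interval=$2 i
  shift 2
  for ((i = 1; i <= rounds; i++)); do
    if "$@" >/dev/null 2>&1; then
      echo "round $i: healthy"
    else
      echo "round $i: unhealthy"
      return 1
    fi
    # Wait between checks, but not after the last one.
    if ((i < rounds)); then
      sleep "$interval"
    fi
  done
  return 0
}
```

For example, `monitor_health 3 5 hdfs haadmin -checkHealth nn1` would check nn1 three times, five seconds apart.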

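ZooKeeper session management

While the local NameNode is healthy, ZKFC keeps a session open in ZooKeeper. If the local NameNode is Active, ZKFC also holds a special lock znode. This lock relies on ZooKeeper's support for ephemeral nodes: if the session ends, the lock znode is deleted automatically.

ZooKeeper-based election

If the local NameNode is healthy and ZKFC sees that no other node currently holds the lock znode, it tries to acquire the lock itself. If it succeeds, it has won the election and is responsible for running a failover to make its local NameNode Active. The failover process is similar to a manual failover: first, the previous Active NameNode is fenced if necessary, and then the local NameNode transitions to the Active state.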
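The election and the ephemeral lock can be illustrated with a local analogy. This is NOT ZooKeeper: it relies on the fact that `mkdir` on a local filesystem either creates the directory or fails if it already exists, so at most one caller can win, much like only one ZKFC can create the lock znode. `LOCK_DIR`, `try_become_active`, and `release_lock` are hypothetical names.

```shell
#!/usr/bin/env bash
# Local analogy for the ZKFC election (not ZooKeeper).
# mkdir fails if the directory exists, so only one caller can "win".
# LOCK_DIR is a hypothetical local path.

LOCK_DIR=${LOCK_DIR:-/tmp/ha-demo-lock}

# try_become_active NAME: prints the outcome; returns 0 if NAME won.
try_become_active() {
  local name=$1
  if mkdir "$LOCK_DIR" 2>/dev/null; then
    echo "$name" > "$LOCK_DIR/owner"
    echo "$name won the election"
  else
    echo "$name stays standby (lock held by $(cat "$LOCK_DIR/owner" 2>/dev/null))"
    return 1
  fi
}

# release_lock stands in for the ephemeral znode disappearing when the
# ZooKeeper session ends; a process could register it with: trap release_lock EXIT
release_lock() {
  rm -rf "$LOCK_DIR"
}
```

The key difference from real ZooKeeper is that nothing here removes the lock automatically if the holder crashes; the ephemeral znode is what gives ZKFC that guarantee.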

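HDFS-HA Cluster Configuration

Official HDFS-HA documentation: https://hadoop.apache.org/docs/stable/hadoop-project-dist/hadoop-hdfs/HDFSHighAvailabilityWithQJM.html

Environment preparation

Set the IP addresses

Set the hostnames and the hostname-to-IP mappings

Disable the firewall

Set up passwordless SSH login

Install the JDK, configure environment variables, etc.

Cluster plan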

| Linux121 | Linux122 | Linux123 |
|---|---|---|
| NameNode | NameNode | |
| JournalNode | JournalNode | JournalNode |
| DataNode | DataNode | DataNode |
| ZK | ZK | ZK |
| | | ResourceManager |
| NodeManager | NodeManager | NodeManager |

Configuring the HDFS-HA cluster

Stop the original HDFS cluster

```shell
[root@Linux121 ~]# stop-dfs.sh
```

On every node, create an ha directory under /opt/lagou/servers

```shell
[root@Linux121 ~]# mkdir /opt/lagou/servers/ha
[root@Linux122 ~]# mkdir /opt/lagou/servers/ha
[root@Linux123 ~]# mkdir /opt/lagou/servers/ha
```

Copy hadoop-2.9.2 from /opt/lagou/servers/ into the ha directory

```shell
[root@Linux121 ~]# cp -r /opt/lagou/servers/hadoop-2.9.2 /opt/lagou/servers/ha
```

Delete the data directory copied over from the original cluster

```shell
[root@Linux121 ~]# rm -rf /opt/lagou/servers/ha/hadoop-2.9.2/data
```

Configure hdfs-site.xml

```shell
[root@Linux121 ~]# cd /opt/lagou/servers/ha/hadoop-2.9.2/etc/hadoop
[root@Linux121 hadoop]# vim hdfs-site.xml
```

```xml
<configuration>
  <!-- Logical name of the HA nameservice -->
  <property>
    <name>dfs.nameservices</name>
    <value>lagoucluster</value>
  </property>
  <!-- IDs of the two NameNodes in the nameservice -->
  <property>
    <name>dfs.ha.namenodes.lagoucluster</name>
    <value>nn1,nn2</value>
  </property>
  <!-- RPC addresses of nn1 and nn2 -->
  <property>
    <name>dfs.namenode.rpc-address.lagoucluster.nn1</name>
    <value>Linux121:9000</value>
  </property>
  <property>
    <name>dfs.namenode.rpc-address.lagoucluster.nn2</name>
    <value>Linux122:9000</value>
  </property>
  <!-- HTTP (web UI) addresses of nn1 and nn2 -->
  <property>
    <name>dfs.namenode.http-address.lagoucluster.nn1</name>
    <value>linux121:50070</value>
  </property>
  <property>
    <name>dfs.namenode.http-address.lagoucluster.nn2</name>
    <value>linux122:50070</value>
  </property>
  <!-- JournalNode quorum that stores the shared edit log -->
  <property>
    <name>dfs.namenode.shared.edits.dir</name>
    <value>qjournal://Linux121:8485;Linux122:8485;Linux123:8485/lagou</value>
  </property>
  <!-- Class that HDFS clients use to find the Active NameNode -->
  <property>
    <name>dfs.client.failover.proxy.provider.lagoucluster</name>
    <value>org.apache.hadoop.hdfs.server.namenode.ha.ConfiguredFailoverProxyProvider</value>
  </property>
  <!-- Fencing: SSH into the previous Active NameNode and kill its process -->
  <property>
    <name>dfs.ha.fencing.methods</name>
    <value>sshfence</value>
  </property>
  <property>
    <name>dfs.ha.fencing.ssh.private-key-files</name>
    <value>/root/.ssh/id_rsa</value>
  </property>
  <!-- Local directory where each JournalNode stores edits -->
  <property>
    <name>dfs.journalnode.edits.dir</name>
    <value>/opt/journalnode</value>
  </property>
  <!-- Enable automatic failover through ZKFC -->
  <property>
    <name>dfs.ha.automatic-failover.enabled</name>
    <value>true</value>
  </property>
</configuration>
```
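The `dfs.namenode.shared.edits.dir` value follows a fixed pattern: `qjournal://`, the JournalNode `host:port` pairs separated by semicolons, then `/` and a journal ID. A small helper can build it from a host list, which avoids typos when nodes change. `qjournal_uri` is a hypothetical name; the port 8485 and the journal ID `lagou` are taken from the configuration above.

```shell
#!/usr/bin/env bash
# Builds a dfs.namenode.shared.edits.dir value from a JournalNode host list.
# qjournal_uri is a hypothetical helper.

# qjournal_uri JOURNAL_ID PORT HOST...
qjournal_uri() {
  local jid=$1 port=$2 h
  shift 2
  local hosts=()
  for h in "$@"; do
    hosts+=("$h:$port")
  done
  # "${hosts[*]}" joins the array elements with the first character of IFS.
  local IFS=';'
  echo "qjournal://${hosts[*]}/$jid"
}
```

Calling `qjournal_uri lagou 8485 Linux121 Linux122 Linux123` reproduces the value used in hdfs-site.xml.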

Configure core-site.xml

```shell
[root@Linux121 hadoop]# vim core-site.xml
```

```xml
<configuration>
  <!-- Clients address the nameservice, not a single NameNode host -->
  <property>
    <name>fs.defaultFS</name>
    <value>hdfs://lagoucluster</value>
  </property>
  <property>
    <name>hadoop.tmp.dir</name>
    <value>/opt/lagou/servers/ha/hadoop-2.9.2/data/tmp</value>
  </property>
  <!-- ZooKeeper ensemble used by ZKFC -->
  <property>
    <name>ha.zookeeper.quorum</name>
    <value>Linux121:2181,Linux122:2181,Linux123:2181</value>
  </property>
</configuration>
```
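Before distributing the files, it can help to read back the values you just wrote. On a configured node, the real command `hdfs getconf -confKey ha.zookeeper.quorum` is the authoritative way. The sketch below is a lighter, hypothetical `get_prop` helper that only works for the one-tag-per-line layout used in these listings; it is not a real XML parser.

```shell
#!/usr/bin/env bash
# Reads a property value from a Hadoop *-site.xml laid out one tag per line.
# get_prop is hypothetical and not a general XML parser.

# get_prop NAME FILE
get_prop() {
  awk -v key="$1" '
    /<name>/ {
      n = $0
      gsub(/.*<name>|<\/name>.*/, "", n)   # keep only the text between the tags
      found = (n == key)
    }
    found && /<value>/ {
      v = $0
      gsub(/.*<value>|<\/value>.*/, "", v)
      print v
      exit
    }
  ' "$2"
}
```

For example, `get_prop fs.defaultFS core-site.xml` should print `hdfs://lagoucluster`; an empty result means the property is missing or spelled differently.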

Copy the configured Hadoop environment to the other nodes

```shell
rsync-script /opt/lagou/servers/ha/hadoop-2.9.2/
```
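`rsync-script` is the author's own helper and its source is not shown here. The following is a hypothetical stand-in under stated assumptions: the node list is hard-coded, passwordless SSH works, and the path is pushed to the same parent directory on every other node. Set `DRY_RUN=1` to print the `rsync` commands instead of running them; `SELF` overrides the detected hostname.

```shell
#!/usr/bin/env bash
# Hypothetical stand-in for the author's rsync-script helper.
# NODES, DRY_RUN and SELF are assumptions for this sketch.

NODES=(Linux121 Linux122 Linux123)

# run: execute the command, or only print it when DRY_RUN=1.
run() {
  if [[ ${DRY_RUN:-0} == 1 ]]; then
    echo "$*"
  else
    "$@"
  fi
}

# sync_to_nodes PATH: copy PATH into the same parent directory on other nodes.
sync_to_nodes() {
  local path=${1%/} self=${SELF:-$(hostname)} host
  # Resolve relative paths against the current directory.
  [[ $path == /* ]] || path=$PWD/$path
  for host in "${NODES[@]}"; do
    if [[ $host == "$self" ]]; then
      continue
    fi
    run rsync -av "$path" "$host:$(dirname "$path")/"
  done
}
```

Stripping the trailing slash and copying into the parent directory keeps `rsync` from nesting the directory inside itself on the target.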

Starting the HDFS-HA cluster

Start the ZooKeeper cluster and check its status

```shell
[root@Linux123 ~]# zk.sh start
start zookeeper server...
ZooKeeper JMX enabled by default
Using config: /opt/lagou/servers/zookeeper-3.4.14/bin/../conf/zoo.cfg
Starting zookeeper ... STARTED
ZooKeeper JMX enabled by default
Using config: /opt/lagou/servers/zookeeper-3.4.14/bin/../conf/zoo.cfg
Starting zookeeper ... STARTED
ZooKeeper JMX enabled by default
Using config: /opt/lagou/servers/zookeeper-3.4.14/bin/../conf/zoo.cfg
Starting zookeeper ... STARTED
[root@Linux123 ~]# zk.sh status
start zookeeper server...
ZooKeeper JMX enabled by default
Using config: /opt/lagou/servers/zookeeper-3.4.14/bin/../conf/zoo.cfg
Mode: follower
ZooKeeper JMX enabled by default
Using config: /opt/lagou/servers/zookeeper-3.4.14/bin/../conf/zoo.cfg
Mode: leader
ZooKeeper JMX enabled by default
Using config: /opt/lagou/servers/zookeeper-3.4.14/bin/../conf/zoo.cfg
Mode: follower
```
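In the status output above, a healthy three-node ensemble reports exactly one `Mode: leader` and two `Mode: follower` lines. A small check makes this explicit; `check_zk_modes` is a hypothetical helper that reads `zk.sh status` output (the author's wrapper script) on standard input.

```shell
#!/usr/bin/env bash
# Counts leader/follower lines in `zk.sh status` output.
# check_zk_modes is hypothetical; returns 0 only if there is exactly one leader.

check_zk_modes() {
  awk '
    /Mode: leader/   { leaders++ }
    /Mode: follower/ { followers++ }
    END {
      printf "leaders=%d followers=%d\n", leaders, followers
      exit (leaders == 1 ? 0 : 1)
    }
  '
}
```

A typical use would be `zk.sh status | check_zk_modes`, run before starting the JournalNodes and ZKFCs that depend on the ensemble.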

On each JournalNode host, start the journalnode service with the following command (use the scripts in the HA installation directory rather than the commands on the PATH, which point to the original installation)

```shell
[root@Linux121 hadoop]# /opt/lagou/servers/ha/hadoop-2.9.2/sbin/hadoop-daemon.sh start journalnode
starting journalnode, logging to /opt/lagou/servers/ha/hadoop-2.9.2/logs/hadoop-root-journalnode-Linux121.out
[root@Linux122 ~]# /opt/lagou/servers/ha/hadoop-2.9.2/sbin/hadoop-daemon.sh start journalnode
starting journalnode, logging to /opt/lagou/servers/ha/hadoop-2.9.2/logs/hadoop-root-journalnode-Linux122.out
[root@Linux123 ~]# /opt/lagou/servers/ha/hadoop-2.9.2/sbin/hadoop-daemon.sh start journalnode
starting journalnode, logging to /opt/lagou/servers/ha/hadoop-2.9.2/logs/hadoop-root-journalnode-Linux123.out
```
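Why three JournalNodes? Every edit must be written to a majority of them, so with N JournalNodes the system tolerates at most (N - 1) / 2 failures, rounded down. The arithmetic below (helper names are hypothetical) shows that a fourth node adds no fault tolerance, which is why odd counts are used.

```shell
#!/usr/bin/env bash
# Quorum arithmetic for JournalNodes (integer division rounds down).

jn_majority()  { echo $(( $1 / 2 + 1 )); }    # writes needed per edit
jn_tolerated() { echo $(( ($1 - 1) / 2 )); }  # failures the quorum survives

for n in 3 4 5; do
  echo "N=$n majority=$(jn_majority "$n") tolerated=$(jn_tolerated "$n")"
done
```

The same majority rule applies to the three-node ZooKeeper ensemble, which likewise survives the loss of one node.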

On [nn1], format the NameNode and start it

```shell
[root@Linux121 hadoop]# /opt/lagou/servers/ha/hadoop-2.9.2/bin/hdfs namenode -format
[root@Linux121 hadoop]# /opt/lagou/servers/ha/hadoop-2.9.2/sbin/hadoop-daemon.sh start namenode
```

On [nn2], synchronize nn1's metadata

```shell
[root@Linux122 ~]# /opt/lagou/servers/ha/hadoop-2.9.2/bin/hdfs namenode -bootstrapStandby
```

On [nn1], initialize the ZKFC state in ZooKeeper

```shell
[root@Linux121 hadoop]# /opt/lagou/servers/ha/hadoop-2.9.2/bin/hdfs zkfc -formatZK
```

On [nn1], start the cluster

```shell
[root@Linux121 hadoop]# /opt/lagou/servers/ha/hadoop-2.9.2/sbin/start-dfs.sh
Starting namenodes on [Linux121 Linux122]
Linux122: starting namenode, logging to /opt/lagou/servers/ha/hadoop-2.9.2/logs/hadoop-root-namenode-Linux122.out
Linux121: namenode running as process 33584. Stop it first.
Linux123: starting datanode, logging to /opt/lagou/servers/ha/hadoop-2.9.2/logs/hadoop-root-datanode-Linux123.out
Linux122: starting datanode, logging to /opt/lagou/servers/ha/hadoop-2.9.2/logs/hadoop-root-datanode-Linux122.out
Linux121: starting datanode, logging to /opt/lagou/servers/ha/hadoop-2.9.2/logs/hadoop-root-datanode-Linux121.out
Starting journal nodes [Linux121 Linux122 Linux123]
Linux121: journalnode running as process 30749. Stop it first.
Linux123: journalnode running as process 30055. Stop it first.
Linux122: journalnode running as process 30072. Stop it first.
Starting ZK Failover Controllers on NN hosts [Linux121 Linux122]
Linux122: starting zkfc, logging to /opt/lagou/servers/ha/hadoop-2.9.2/logs/hadoop-root-zkfc-Linux122.out
Linux121: starting zkfc, logging to /opt/lagou/servers/ha/hadoop-2.9.2/logs/hadoop-root-zkfc-Linux121.out
```
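The one-time bootstrap steps above must run in a fixed order. The sketch below collects them into one function under these assumptions: passwordless SSH from the machine running it, the `HA_HOME` path used throughout this section, and a ZooKeeper ensemble that is already running. `bootstrap_hdfs_ha` and `run` are hypothetical names; `DRY_RUN=1` prints the commands instead of executing them. Because `hdfs namenode -format` erases existing NameNode metadata, this is only for a fresh cluster.

```shell
#!/usr/bin/env bash
# One-time HDFS-HA bootstrap order (hypothetical helper, assumes passwordless
# SSH and a running ZooKeeper ensemble). Do NOT rerun on a live cluster:
# the format step erases NameNode metadata.

HA_HOME=/opt/lagou/servers/ha/hadoop-2.9.2

run() {
  if [[ ${DRY_RUN:-0} == 1 ]]; then echo "$*"; else "$@"; fi
}

bootstrap_hdfs_ha() {
  local h
  # 1. JournalNodes first: the format step also initializes the shared edits.
  for h in Linux121 Linux122 Linux123; do
    run ssh "$h" "$HA_HOME/sbin/hadoop-daemon.sh start journalnode" || return 1
  done
  # 2. Format nn1 and start it.
  run ssh Linux121 "$HA_HOME/bin/hdfs namenode -format" || return 1
  run ssh Linux121 "$HA_HOME/sbin/hadoop-daemon.sh start namenode" || return 1
  # 3. nn2 copies its metadata from the running nn1.
  run ssh Linux122 "$HA_HOME/bin/hdfs namenode -bootstrapStandby" || return 1
  # 4. Create the HA state znode in ZooKeeper.
  run ssh Linux121 "$HA_HOME/bin/hdfs zkfc -formatZK" || return 1
  # 5. Start the remaining daemons (nn2, DataNodes, ZKFCs).
  run ssh Linux121 "$HA_HOME/sbin/start-dfs.sh"
}
```

Running `DRY_RUN=1 bootstrap_hdfs_ha` first is a cheap way to review the exact commands before touching the cluster.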

Verification

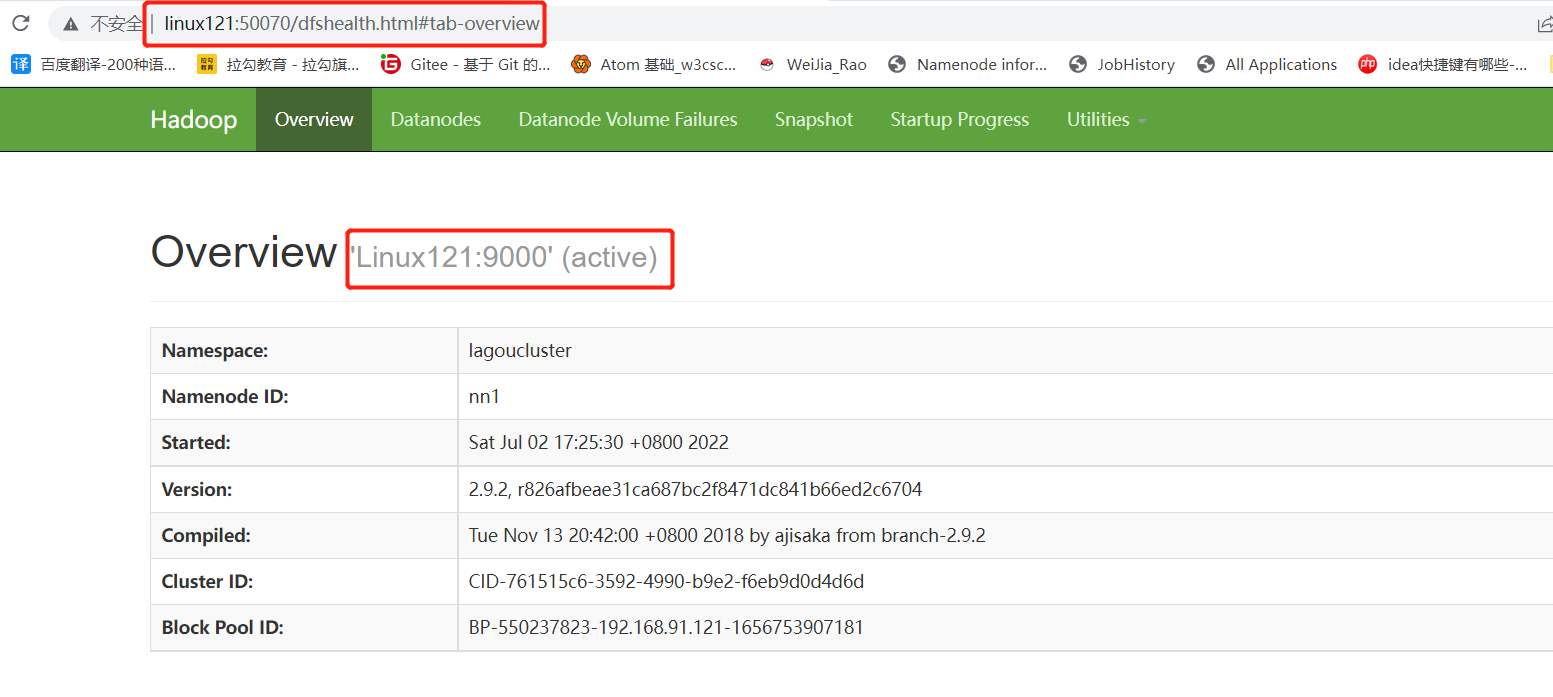

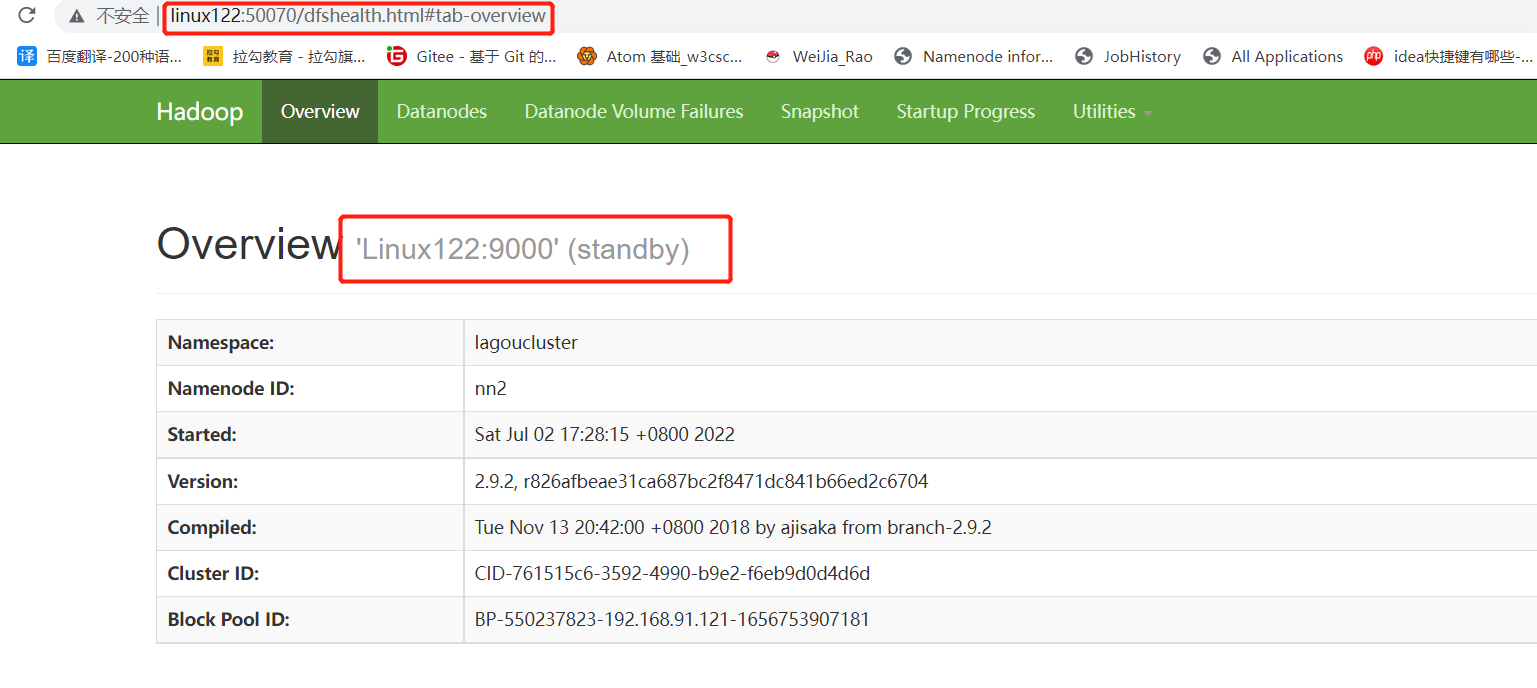

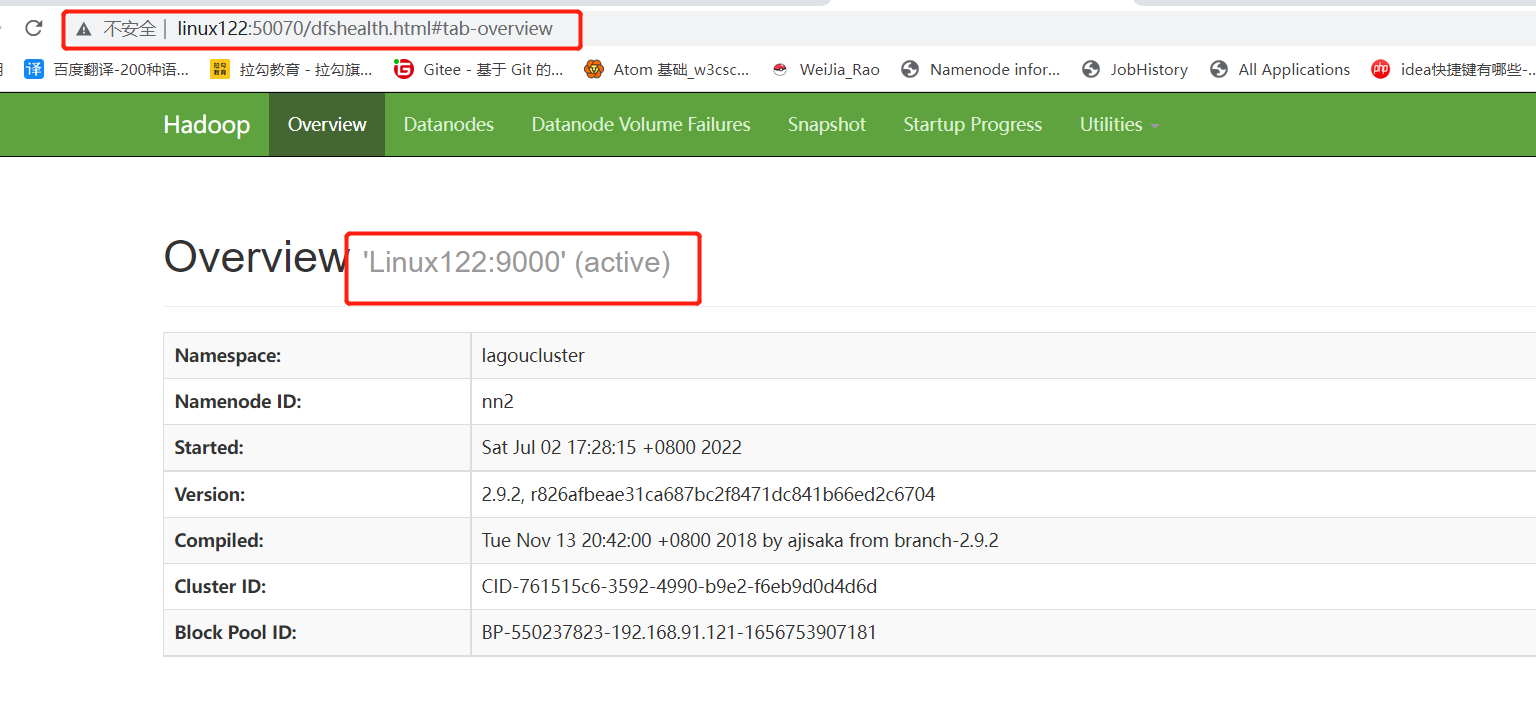

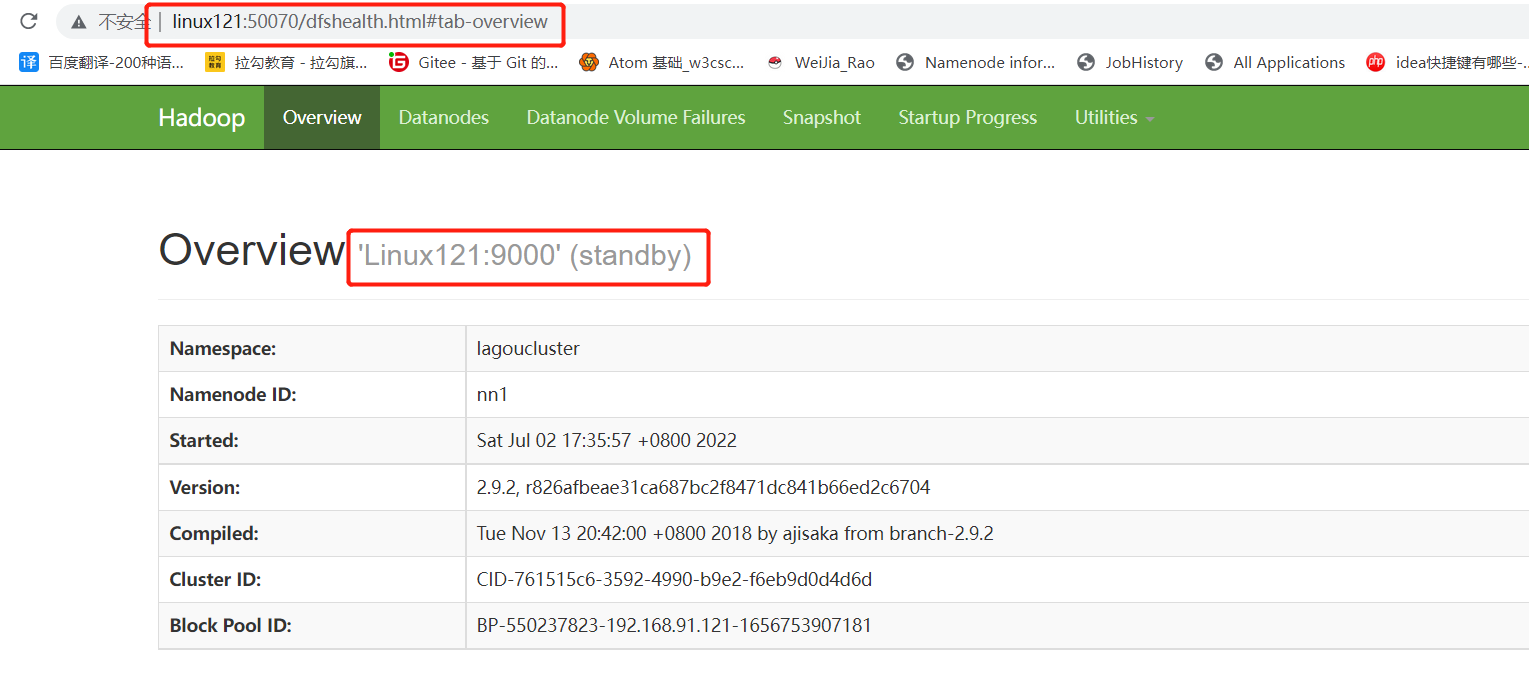

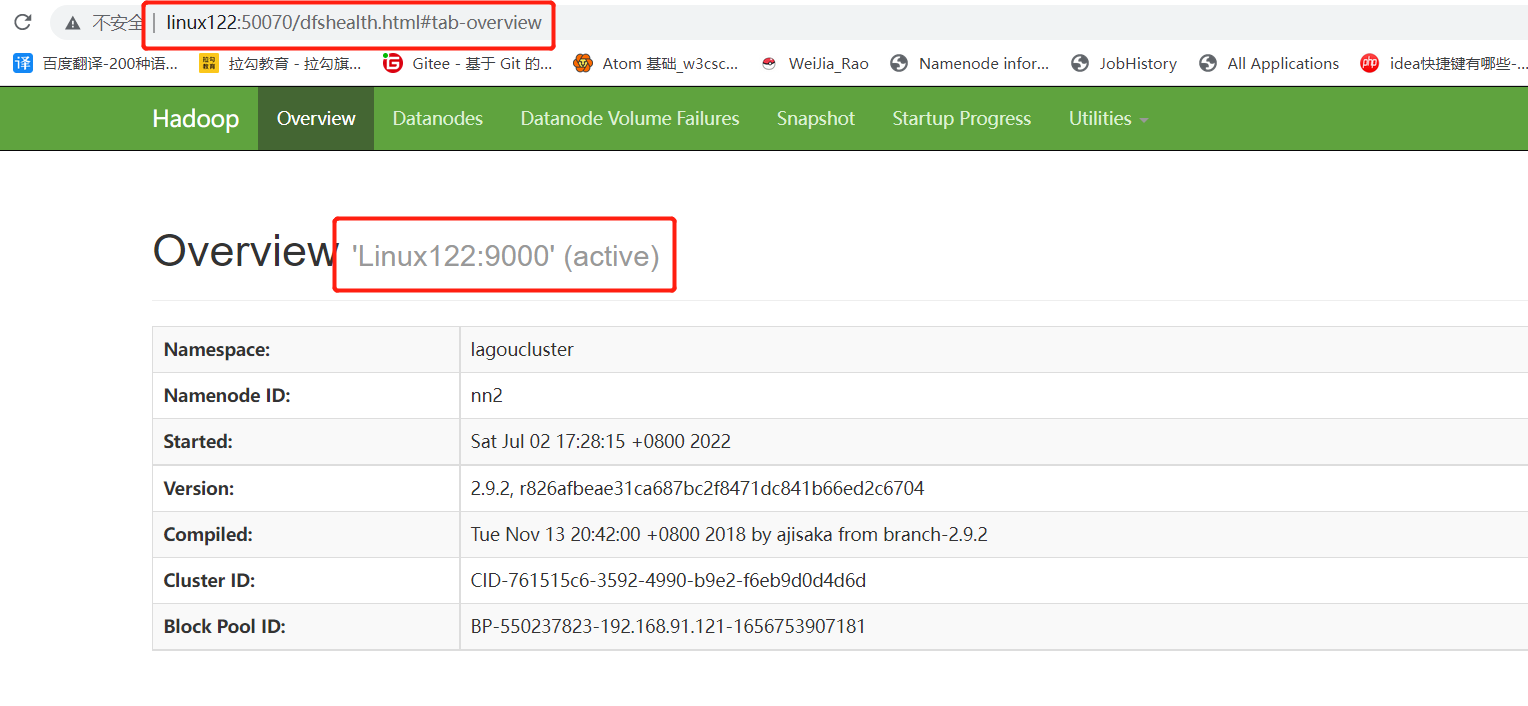

Open http://linux121:50070/ and http://linux122:50070/, as shown below:

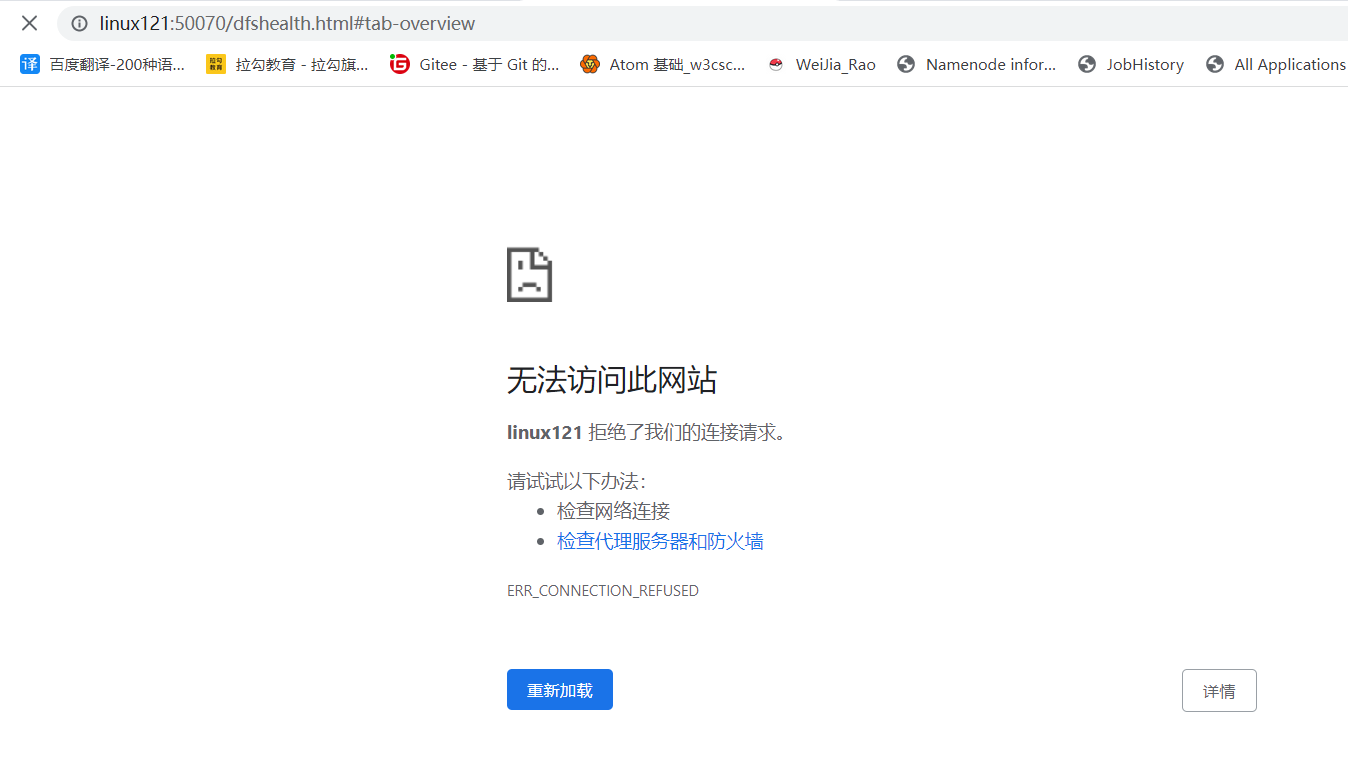
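Besides the web UI, the real command `hdfs haadmin -getServiceState <id>` prints `active` or `standby` for a NameNode. The sketch below checks that exactly one of the listed NameNodes is Active. `check_single_active` is a hypothetical helper, and `STATE_CMD` can be overridden so the logic can be tried without a cluster.

```shell
#!/usr/bin/env bash
# Verifies that exactly one NameNode reports "active".
# check_single_active is hypothetical; STATE_CMD defaults to the real command.

STATE_CMD=${STATE_CMD:-"hdfs haadmin -getServiceState"}

# check_single_active ID...: prints each state, returns 0 iff one is active.
check_single_active() {
  local id state active=0
  for id in "$@"; do
    # STATE_CMD is intentionally unquoted so it splits into command + args.
    state=$($STATE_CMD "$id" 2>/dev/null)
    echo "$id: ${state:-unreachable}"
    if [[ $state == active ]]; then
      active=$((active + 1))
    fi
  done
  [[ $active -eq 1 ]]
}
```

On this cluster, `check_single_active nn1 nn2` would be expected to print one `active` and one `standby` line.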

Kill the Active NameNode process and check whether the Active and Standby NameNodes swap roles
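Failover is not instantaneous: ZKFC first has to notice the failure and the ZooKeeper session of the dead node has to expire. A polling loop with a timeout avoids guessing how long to wait. `wait_for_active` is a hypothetical helper; `STATE_CMD` defaults to the real `hdfs haadmin -getServiceState` and can be overridden for testing.

```shell
#!/usr/bin/env bash
# Polls until the given NameNode reports "active", or gives up after a timeout.
# wait_for_active is hypothetical.

STATE_CMD=${STATE_CMD:-"hdfs haadmin -getServiceState"}

# wait_for_active ID TIMEOUT_SECONDS
wait_for_active() {
  local id=$1 timeout=$2 waited=0
  until [[ $($STATE_CMD "$id" 2>/dev/null) == active ]]; do
    if ((waited >= timeout)); then
      echo "$id did not become active within ${timeout}s" >&2
      return 1
    fi
    sleep 1
    waited=$((waited + 1))
  done
  echo "$id is active after ${waited}s"
}
```

After killing nn1, for instance, `wait_for_active nn2 60` would report how long the switch took.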

Restart the original Active NameNode and observe the change

```shell
[root@Linux121 hadoop]# /opt/lagou/servers/ha/hadoop-2.9.2/sbin/hadoop-daemon.sh start namenode
starting namenode, logging to /opt/lagou/servers/ha/hadoop-2.9.2/logs/hadoop-root-namenode-Linux121.out
```

YARN-HA Configuration

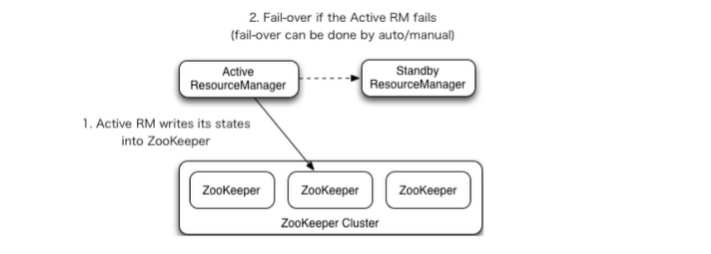

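YARN-HA Working Mechanism

Official YARN-HA documentation: https://hadoop.apache.org/docs/stable/hadoop-yarn/hadoop-yarn-site/ResourceManagerHA.html

Environment preparation

Set the IP addresses

Set the hostnames and the hostname-to-IP mappings

Disable the firewall

Set up passwordless SSH login

Install the JDK, configure environment variables, etc.

Set up the ZooKeeper cluster

Cluster plan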

| Linux121 | Linux122 | Linux123 |
|---|---|---|
| NameNode | NameNode | |
| JournalNode | JournalNode | JournalNode |
| DataNode | DataNode | DataNode |
| ZK | ZK | ZK |
| | ResourceManager | ResourceManager |
| NodeManager | NodeManager | NodeManager |

Configuring the YARN-HA cluster

Stop the original YARN cluster

```shell
[root@Linux123 ~]# stop-yarn.sh
```

Configure yarn-site.xml

```shell
[root@Linux121 ~]# cd /opt/lagou/servers/ha/hadoop-2.9.2/etc/hadoop
[root@Linux121 hadoop]# vim yarn-site.xml
```

```xml
<configuration>
  <property>
    <name>yarn.nodemanager.aux-services</name>
    <value>mapreduce_shuffle</value>
  </property>
  <!-- Enable ResourceManager HA -->
  <property>
    <name>yarn.resourcemanager.ha.enabled</name>
    <value>true</value>
  </property>
  <!-- Declare the two ResourceManagers: cluster id, RM ids, and their hosts -->
  <property>
    <name>yarn.resourcemanager.cluster-id</name>
    <value>cluster-yarn</value>
  </property>
  <property>
    <name>yarn.resourcemanager.ha.rm-ids</name>
    <value>rm1,rm2</value>
  </property>
  <property>
    <name>yarn.resourcemanager.hostname.rm1</name>
    <value>linux122</value>
  </property>
  <property>
    <name>yarn.resourcemanager.hostname.rm2</name>
    <value>linux123</value>
  </property>
  <!-- Address of the ZooKeeper ensemble -->
  <property>
    <name>yarn.resourcemanager.zk-address</name>
    <value>Linux121:2181,Linux122:2181,Linux123:2181</value>
  </property>
  <!-- Enable automatic recovery -->
  <property>
    <name>yarn.resourcemanager.recovery.enabled</name>
    <value>true</value>
  </property>
  <!-- Store the ResourceManager state in the ZooKeeper ensemble -->
  <property>
    <name>yarn.resourcemanager.store.class</name>
    <value>org.apache.hadoop.yarn.server.resourcemanager.recovery.ZKRMStateStore</value>
  </property>
</configuration>
```
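A common mistake in this file is listing an id in `yarn.resourcemanager.ha.rm-ids` without a matching `yarn.resourcemanager.hostname.<id>`. The sketch below checks that pairing. `prop_value` and `check_rm_ids` are hypothetical helpers that assume the one-tag-per-line layout shown above; they are not a real XML parser.

```shell
#!/usr/bin/env bash
# Checks that each id in yarn.resourcemanager.ha.rm-ids has a matching
# yarn.resourcemanager.hostname.<id>. Hypothetical helpers, line-based parsing.

prop_value() {  # prop_value NAME FILE
  awk -v key="$1" '
    /<name>/ { n = $0; gsub(/.*<name>|<\/name>.*/, "", n); found = (n == key) }
    found && /<value>/ { v = $0; gsub(/.*<value>|<\/value>.*/, "", v); print v; exit }
  ' "$2"
}

check_rm_ids() {  # check_rm_ids FILE
  local file=$1 ids id host missing=0
  ids=$(prop_value yarn.resourcemanager.ha.rm-ids "$file")
  if [[ -z $ids ]]; then
    echo "yarn.resourcemanager.ha.rm-ids is not set"
    return 1
  fi
  # Turn "rm1,rm2" into "rm1 rm2" and iterate over the ids.
  for id in ${ids//,/ }; do
    host=$(prop_value "yarn.resourcemanager.hostname.$id" "$file")
    if [[ -n $host ]]; then
      echo "$id -> $host"
    else
      echo "$id -> MISSING"
      missing=1
    fi
  done
  return "$missing"
}
```

Against the file above, `check_rm_ids yarn-site.xml` should report `rm1 -> linux122` and `rm2 -> linux123`.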

Sync the updated configuration to the other nodes

```shell
[root@Linux121 hadoop]# rsync-script yarn-site.xml
```

Start the YARN cluster

```shell
[root@Linux123 ~]# /opt/lagou/servers/ha/hadoop-2.9.2/sbin/start-yarn.sh
starting yarn daemons
starting resourcemanager, logging to /opt/lagou/servers/ha/hadoop-2.9.2/logs/yarn-root-resourcemanager-Linux123.out
Linux123: starting nodemanager, logging to /opt/lagou/servers/ha/hadoop-2.9.2/logs/yarn-root-nodemanager-Linux123.out
Linux122: starting nodemanager, logging to /opt/lagou/servers/ha/hadoop-2.9.2/logs/yarn-root-nodemanager-Linux122.out
Linux121: starting nodemanager, logging to /opt/lagou/servers/ha/hadoop-2.9.2/logs/yarn-root-nodemanager-Linux121.out
```

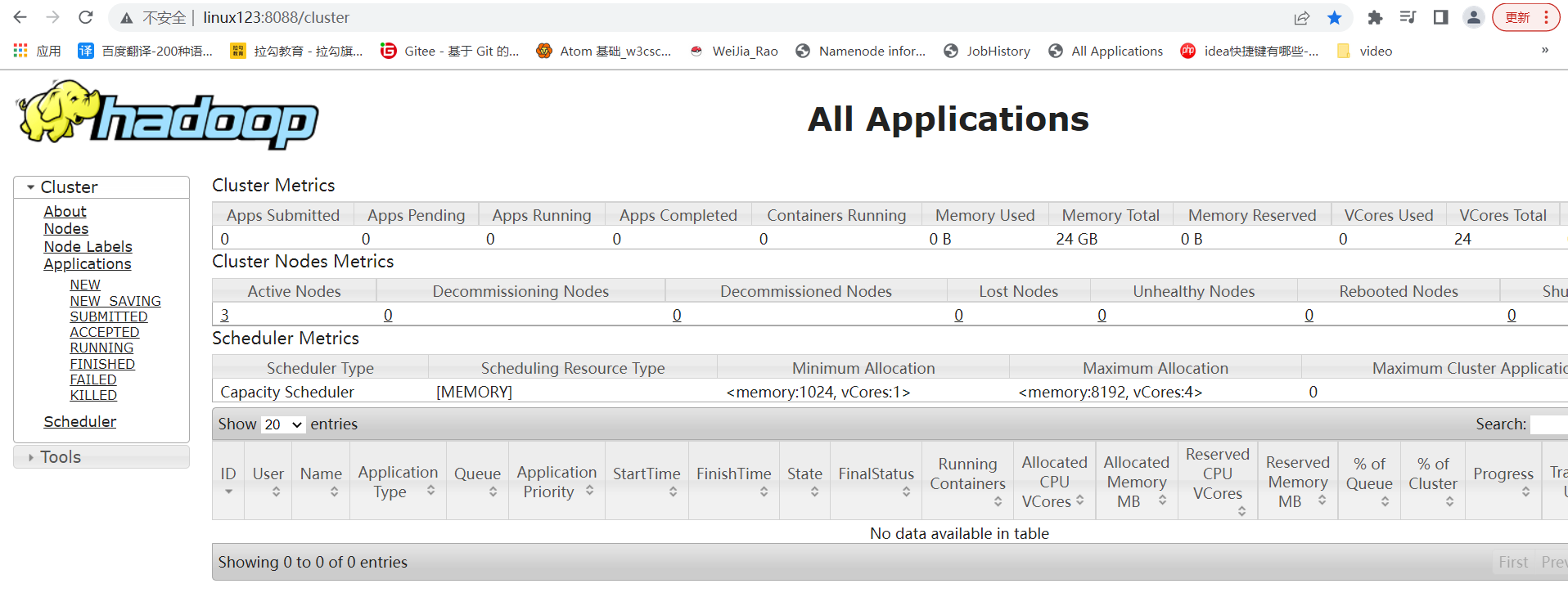

Check that http://linux123:8088/ opens normally.

Start the ResourceManager on Linux122 (in Hadoop 2.x, start-yarn.sh only starts a ResourceManager on the node where it is run):

```shell
[root@Linux122 ~]# /opt/lagou/servers/ha/hadoop-2.9.2/sbin/yarn-daemon.sh start resourcemanager
starting resourcemanager, logging to /opt/lagou/servers/ha/hadoop-2.9.2/logs/yarn-root-resourcemanager-Linux122.out
```

Opening http://linux122:8088/ redirects to http://linux123:8088/, which shows that Linux123 is the active ResourceManager.

Kill the ResourceManager on Linux123

```shell
[root@Linux123 ~]# jps
64738 ResourceManager
64883 NodeManager
6595 -- process information unavailable
35926 DataNode
30055 JournalNode
26458 QuorumPeerMain
75674 Jps
6590 -- process information unavailable
[root@Linux123 ~]# kill -9 64738
```

Now http://linux122:8088/ opens normally without redirecting to http://linux123:8088/, while http://linux123:8088/ is unreachable.

Start the ResourceManager on Linux123 again

```shell
[root@Linux123 ~]# /opt/lagou/servers/ha/hadoop-2.9.2/sbin/yarn-daemon.sh start resourcemanager
starting resourcemanager, logging to /opt/lagou/servers/ha/hadoop-2.9.2/logs/yarn-root-resourcemanager-Linux123.out
```

Opening http://linux123:8088/ now redirects to http://linux122:8088/, which shows that Linux122 is the active ResourceManager.
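Copying the PID from `jps` by hand is error-prone. A one-line `awk` filter can pick it out by exact process name, so `DataNode` never matches `NameNode`. `jps_pid` is a hypothetical helper that reads `jps` output on standard input; `kill -9` is used here, as in the step above, to simulate a crash (`yarn-daemon.sh stop resourcemanager` would stop it gracefully instead).

```shell
#!/usr/bin/env bash
# Prints the PID(s) of Java processes with exactly the given jps name.
# jps_pid is a hypothetical helper.

# jps_pid NAME   (reads `jps` output on stdin)
jps_pid() {
  awk -v name="$1" '$2 == name { print $1 }'
}

# On the node itself, one might run:
#   pid=$(jps | jps_pid ResourceManager)
#   [ -n "$pid" ] && kill -9 "$pid"
```

The same helper works for the NameNode failover test, e.g. `jps | jps_pid NameNode` on the Active NameNode's host.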